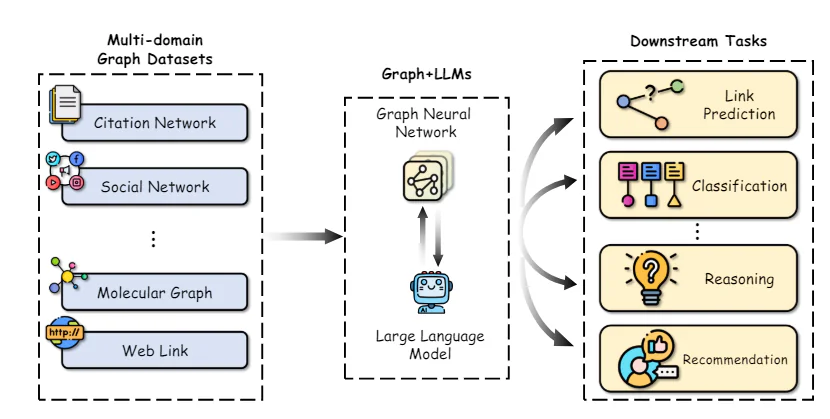

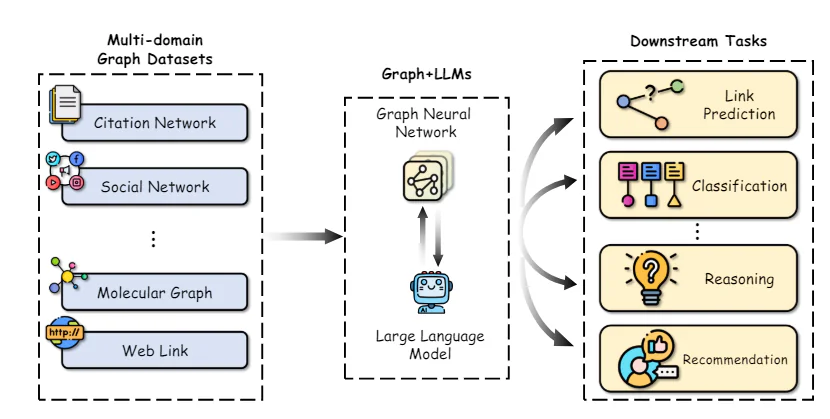

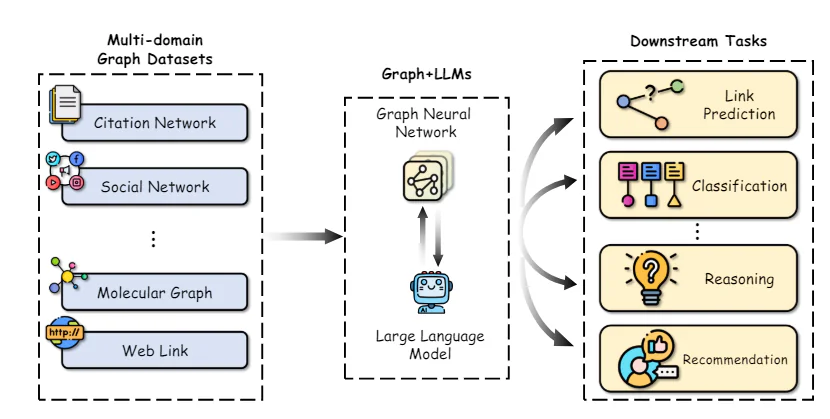

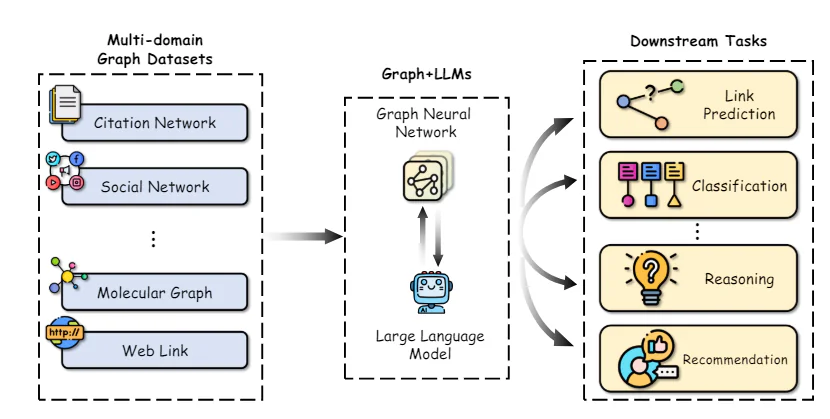

Graphs are data structures that represent complex relationships across a wide range of domains, including social networks, knowledge bases, biological systems, and many more. In these graphs, entities are represented as nodes, and their relationships are depicted as edges.

The ability to effectively represent and reason about these intricate relational structures is crucial for enabling advancements in fields like network science, cheminformatics, and recommender systems.

Graph Neural Networks (GNNs) have emerged as a powerful deep learning framework for graph machine learning tasks. By incorporating the graph topology into the neural network architecture through neighborhood aggregation or graph convolutions, GNNs can learn low-dimensional vector representations that encode both the node features and their structural roles. This allows GNNs to achieve state-of-the-art performance on tasks such as node classification, link prediction, and graph classification across diverse application areas.

While GNNs have driven substantial progress, some key challenges remain. Obtaining high-quality labeled data for training supervised GNN models can be expensive and time-consuming. Additionally, GNNs can struggle with heterogeneous graph structures and situations where the graph distribution at test time differs significantly from the training data (out-of-distribution generalization).

In parallel, Large Language Models (LLMs) like GPT-4 and LLaMA have taken the world by storm with their incredible natural language understanding and generation capabilities. With billions of parameters and training on massive text corpora, LLMs exhibit remarkable few-shot learning abilities, generalization across tasks, and commonsense reasoning skills that were once thought to be extremely challenging for AI systems.

The tremendous success of LLMs has catalyzed explorations into leveraging their power for graph machine learning tasks. On one hand, the knowledge and reasoning capabilities of LLMs present opportunities to enhance traditional GNN models. Conversely, the structured representations and factual knowledge inherent in graphs could be instrumental in addressing some key limitations of LLMs, such as hallucinations and lack of interpretability.

In this article, we will delve into the latest research at the intersection of graph machine learning and large language models. We will explore how LLMs can be used to enhance various aspects of graph ML, review approaches to incorporate graph knowledge into LLMs, and discuss emerging applications and future directions for this exciting field.

Graph Neural Networks and Self-Supervised Learning

To provide the necessary context, we will first briefly review the core concepts and methods in graph neural networks and self-supervised graph representation learning.

Graph Neural Network Architectures

Graph Neural Network Architecture – source

The key distinction between traditional deep neural networks and GNNs lies in their ability to operate directly on graph-structured data. GNNs follow a neighborhood aggregation scheme, where each node aggregates feature vectors from its neighbors to compute its own representation.
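To make the neighborhood aggregation idea concrete, here is a minimal sketch of a single aggregation round using plain Python. It assumes mean aggregation and omits the learnable weights and nonlinearities that real GNN layers apply; the function name `mean_aggregate` and the toy graph are illustrative only.

```python
from typing import Dict, List


def mean_aggregate(
    features: Dict[int, List[float]],
    neighbors: Dict[int, List[int]],
) -> Dict[int, List[float]]:
    """One round of mean aggregation over each node and its neighbors."""
    updated = {}
    for node, own in features.items():
        # Include the node's own features so isolated nodes keep their values.
        group = [own] + [features[n] for n in neighbors.get(node, [])]
        dim = len(own)
        updated[node] = [sum(vec[i] for vec in group) / len(group) for i in range(dim)]
    return updated


# Toy graph: node 0 is connected to nodes 1 and 2.
features = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [1.0, 1.0]}
neighbors = {0: [1, 2], 1: [0], 2: [0]}
hidden = mean_aggregate(features, neighbors)
```

Stacking several such rounds lets information travel further across the graph, since each round mixes in features from one hop further away.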

Numerous GNN architectures have been proposed that differ in how they aggregate messages from neighbors and update node representations, such as Graph Convolutional Networks (GCNs), GraphSAGE, Graph Attention Networks (GATs), and Graph Isomorphism Networks (GINs), among others.

More recently, graph transformers have gained popularity by adapting the self-attention mechanism from natural language transformers to operate on graph-structured data. Some examples include Graphormer and GraphFormers. These models are able to capture long-range dependencies across the graph better than purely neighborhood-based GNNs.

Self-Supervised Learning on Graphs

While GNNs are powerful representational models, their performance is often bottlenecked by the lack of large labeled datasets required for supervised training. Self-supervised learning has emerged as a promising paradigm to pre-train GNNs on unlabeled graph data by leveraging pretext tasks that only require the intrinsic graph structure and node features.

Self-Supervised Graph

Some common pretext tasks used for self-supervised GNN pre-training include:

- Node Property Prediction: Randomly masking or corrupting a portion of the node attributes/features and tasking the GNN to reconstruct them.

- Edge/Link Prediction: Learning to predict whether an edge exists between a pair of nodes, often based on random edge masking.

- Contrastive Learning: Maximizing similarities between graph views of the same graph sample while pushing apart views from different graphs.

- Mutual Information Maximization: Maximizing the mutual information between local node representations and a target representation like the global graph embedding.

Pretext tasks like these allow the GNN to extract meaningful structural and semantic patterns from the unlabeled graph data during pre-training. The pre-trained GNN can then be fine-tuned on relatively small labeled subsets to excel at various downstream tasks like node classification, link prediction, and graph classification.
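As an illustration of how a pretext task can be built from structure alone, the sketch below prepares data for the edge/link prediction task: it hides a fraction of edges as positive examples and samples the same number of non-edges as negatives. The function name `mask_edges`, the `mask_ratio` parameter, and the toy graph are assumptions for illustration, and the negative sampler assumes the graph is sparse enough to contain sufficient non-edges.

```python
import random
from typing import List, Tuple

Edge = Tuple[int, int]


def mask_edges(edges: List[Edge], num_nodes: int, mask_ratio: float, seed: int = 0):
    """Split undirected edges into visible and masked sets, plus sampled non-edges."""
    rng = random.Random(seed)
    shuffled = edges[:]
    rng.shuffle(shuffled)
    num_masked = max(1, int(len(shuffled) * mask_ratio))
    positives = shuffled[:num_masked]   # hidden edges the model must recover
    visible = shuffled[num_masked:]     # edges the GNN is allowed to see
    taken = {frozenset(e) for e in edges}
    negatives = []
    # Assumes the graph has at least num_masked non-edges; otherwise this loops forever.
    while len(negatives) < num_masked:
        u, v = rng.randrange(num_nodes), rng.randrange(num_nodes)
        if u != v and frozenset((u, v)) not in taken:
            negatives.append((u, v))
            taken.add(frozenset((u, v)))  # avoid sampling the same non-edge twice
    return visible, positives, negatives


edges = [(0, 1), (1, 2), (2, 3), (3, 4), (0, 4)]
visible, positives, negatives = mask_edges(edges, num_nodes=5, mask_ratio=0.4)
```

A GNN would then be run on the visible edges only and trained to score the masked edges higher than the sampled non-edges.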

By leveraging self-supervision, GNNs pre-trained on large unlabeled datasets exhibit better generalization, robustness to distribution shifts, and efficiency compared to training from scratch. However, traditional GNN-based self-supervised methods still have some key limitations, and the next section explores how LLMs can help address them.

Enhancing Graph ML with Large Language Models

Integration of Graphs and LLM – source

The remarkable capabilities of LLMs in understanding natural language, reasoning, and few-shot learning present opportunities to enhance multiple aspects of graph machine learning pipelines. We explore some key research directions in this space:

A key challenge in applying GNNs is obtaining high-quality feature representations for nodes and edges, especially when they contain rich textual attributes like descriptions, titles, or abstracts. Traditionally, simple bag-of-words or pre-trained word embedding models have been used, which often fail to capture the nuanced semantics.

Recent works have demonstrated the power of leveraging large language models as text encoders to construct better node/edge feature representations before passing them to the GNN. For example, Chen et al. utilize LLMs like GPT-3 to encode textual node attributes, showing significant performance gains over traditional word embeddings on node classification tasks.

Beyond better text encoders, LLMs can be used to generate augmented information from the original text attributes in a semi-supervised manner. TAPE generates potential labels/explanations for nodes using an LLM and uses these as additional augmented features. KEA extracts terms from text attributes using an LLM and obtains detailed descriptions for these terms to augment features.

By improving the quality and expressiveness of input features, LLMs can impart their superior natural language understanding capabilities to GNNs, boosting performance on downstream tasks.

Alleviating Reliance on Labeled Data

A key advantage of LLMs is their ability to perform reasonably well on new tasks with little to no labeled data, thanks to their pre-training on vast text corpora. This few-shot learning capability can be leveraged to alleviate the reliance of GNNs on large labeled datasets.

One approach is to use LLMs to make predictions on graph tasks directly, by describing the graph structure and node information in natural language prompts. Methods like InstructGLM and GPT4Graph apply LLMs such as LLaMA and GPT-4 to carefully designed prompts that incorporate graph topology details like node connections and neighborhoods, either by instruction-tuning the model on such prompts or by prompting it as-is. The resulting models can then generate predictions for tasks like node classification and link prediction without task-specific labeled examples at inference time.
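A rough sketch of what "describing the graph in natural language" can look like is shown below. The prompt wording, the function name `describe_graph`, and the toy citation graph are hypothetical; they are not the templates used by InstructGLM or GPT4Graph.

```python
from typing import Dict, List, Tuple


def describe_graph(
    edges: List[Tuple[int, int]],
    node_text: Dict[int, str],
    target: int,
) -> str:
    """Render node texts and connections as a plain-text prompt for an LLM."""
    lines = ["You are given a citation graph."]
    for node, text in sorted(node_text.items()):
        lines.append(f"Node {node}: {text}")
    for u, v in edges:
        lines.append(f"Node {u} is connected to node {v}.")
    lines.append(f"Question: which category does node {target} belong to?")
    return "\n".join(lines)


prompt = describe_graph(
    edges=[(0, 1), (1, 2)],
    node_text={0: "A paper on graph attention.", 1: "A survey of GNNs.", 2: "A paper on BERT."},
    target=1,
)
```

The resulting string would be sent to whichever LLM is in use. Prompts like this grow with the number of nodes and edges, which is one reason such methods typically describe only a local neighborhood rather than the full graph.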

While using LLMs as black-box predictors has shown promise, their performance degrades for more complex graph tasks where explicit modeling of the structure is beneficial. Some approaches thus use LLMs in conjunction with GNNs – the GNN encodes the graph structure while the LLM provides enhanced semantic understanding of nodes from their text descriptions.

Graph Understanding with LLM Framework – Source

GraphLLM explores two strategies: 1) LLMs-as-Enhancers where LLMs encode text node attributes before passing to the GNN, and 2) LLMs-as-Predictors where the LLM takes the GNN’s intermediate representations as input to make final predictions.

GLEM goes further by proposing a variational EM algorithm that alternates between updating the LLM and GNN components for mutual enhancement.

By reducing reliance on labeled data through few-shot capabilities and semi-supervised augmentation, LLM-enhanced graph learning methods can unlock new applications and improve data efficiency.

Enhancing LLMs with Graphs

While LLMs have been tremendously successful, they still suffer from key limitations like hallucinations (generating non-factual statements), lack of interpretability in their reasoning process, and inability to maintain consistent factual knowledge.

Graphs, especially knowledge graphs which represent structured factual information from reliable sources, present promising avenues to address these shortcomings. We explore some emerging approaches in this direction:

Knowledge Graph Enhanced LLM Pre-training

Similar to how LLMs are pre-trained on large text corpora, recent works have explored pre-training them on knowledge graphs to imbue better factual awareness and reasoning capabilities.

Some approaches modify the input data by simply concatenating or aligning factual KG triples with natural language text during pre-training. E-BERT aligns KG entity vectors with BERT’s wordpiece embeddings, while K-BERT constructs trees containing the original sentence and relevant KG triples.
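The simplest variant mentioned above, concatenating KG triples with text, can be sketched as follows. This is only an illustration of the concatenation idea under simplifying assumptions (exact string matching on the head entity, a hypothetical `append_triples` helper, made-up triples); it does not reproduce K-BERT's sentence trees or E-BERT's embedding alignment.

```python
from typing import List, Tuple

Triple = Tuple[str, str, str]


def append_triples(sentence: str, triples: List[Triple], max_triples: int = 2) -> str:
    """Append verbalized KG triples whose head entity is mentioned in the sentence."""
    mentioned = [t for t in triples if t[0].lower() in sentence.lower()]
    facts = [f"{head} {relation} {tail}." for head, relation, tail in mentioned[:max_triples]]
    return " ".join([sentence] + facts)


triples = [
    ("Tim Cook", "is CEO of", "Apple"),
    ("Beijing", "is the capital of", "China"),
    ("Paris", "is the capital of", "France"),
]
augmented = append_triples("Tim Cook visited Beijing.", triples)
```

Here only the triples about entities that appear in the sentence are added, so the unrelated fact about Paris is left out of the training text.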

The Role of LLMs in Graph Machine Learning

Researchers have explored several ways to integrate LLMs into the graph learning pipeline, each with its unique advantages and applications. Here are some of the prominent roles LLMs can play:

- LLM as an Enhancer: In this approach, LLMs are used to enrich the textual attributes associated with the nodes in a text-attributed graph (TAG). The LLM’s ability to generate explanations, knowledge entities, or pseudo-labels can augment the semantic information available to the GNN, leading to improved node representations and downstream task performance.

For example, the TAPE (Text Augmented Pre-trained Encoders) model leverages ChatGPT to generate explanations and pseudo-labels for citation network papers, which are then used to fine-tune a language model. The resulting embeddings are fed into a GNN for node classification and link prediction tasks, achieving state-of-the-art results.

- LLM as a Predictor: Rather than enhancing the input features, some approaches directly employ LLMs as the predictor component for graph-related tasks. This involves converting the graph structure into a textual representation that can be processed by the LLM, which then generates the desired output, such as node labels or graph-level predictions.

One notable example is the GPT4Graph model, which represents graphs using the Graph Modelling Language (GML) and leverages the powerful GPT-4 LLM for zero-shot graph reasoning tasks.
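For readers unfamiliar with GML, the sketch below serializes a small graph into that text format so it can be placed in a prompt. The helper name `to_gml` and the toy graph are illustrative assumptions, and only the basic `node` and `edge` blocks of GML are covered.

```python
from typing import Dict, List, Tuple


def to_gml(labels: Dict[int, str], edges: List[Tuple[int, int]]) -> str:
    """Serialize node labels and edges as a minimal GML document."""
    lines = ["graph ["]
    for node_id, label in labels.items():
        lines += ["  node [", f"    id {node_id}", f'    label "{label}"', "  ]"]
    for source, target in edges:
        lines += ["  edge [", f"    source {source}", f"    target {target}", "  ]"]
    lines.append("]")
    return "\n".join(lines)


gml_text = to_gml({0: "Paper A", 1: "Paper B", 2: "Paper C"}, [(0, 1), (1, 2)])
```

The resulting string can be embedded in a prompt alongside a question about the graph, which is the general pattern of text-based graph reasoning described above.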

- GNN-LLM Alignment: Another line of research focuses on aligning the embedding spaces of GNNs and LLMs, allowing for a seamless integration of structural and semantic information. These approaches treat the GNN and LLM as separate modalities and employ techniques like contrastive learning or distillation to align their representations.

The MoleculeSTM model, for instance, uses a contrastive objective to align the embeddings of a GNN and an LLM, enabling the LLM to incorporate structural information from the GNN while the GNN benefits from the LLM’s semantic knowledge.
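The contrastive alignment idea can be sketched with an InfoNCE-style loss over paired graph and text embeddings. This is a generic, one-directional illustration in plain Python, not MoleculeSTM's exact objective; the function names, the temperature value, and the toy embeddings are assumptions.

```python
import math
from typing import List


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def info_nce(graph_embs: List[List[float]], text_embs: List[List[float]],
             temperature: float = 0.1) -> float:
    """Average cross-entropy of matching each graph embedding to its paired text."""
    total = 0.0
    for i, g in enumerate(graph_embs):
        logits = [cosine(g, t) / temperature for t in text_embs]
        log_denominator = math.log(sum(math.exp(value) for value in logits))
        total += log_denominator - logits[i]  # -log softmax of the true pair
    return total / len(graph_embs)


graph_embs = [[1.0, 0.0], [0.0, 1.0]]
aligned_loss = info_nce(graph_embs, [[1.0, 0.0], [0.0, 1.0]])
misaligned_loss = info_nce(graph_embs, [[0.0, 1.0], [1.0, 0.0]])
```

The loss is low when each graph embedding is closest to its own text embedding and high when the pairs are swapped, which is the signal that pulls the two embedding spaces together during training.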

Challenges and Solutions

While the integration of LLMs and graph learning holds immense promise, several challenges need to be addressed:

- Efficiency and Scalability: LLMs are notoriously resource-intensive, often containing billions of parameters and demanding immense computational power for training and inference. This can be a significant bottleneck for deploying LLM-enhanced graph learning models in real-world applications, especially on resource-constrained devices.

One promising solution is knowledge distillation, where the knowledge from a large LLM (teacher model) is transferred to a smaller, more efficient GNN (student model).
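A common way to implement distillation is to train the student to match the teacher's softened output distribution. The sketch below computes that matching term as a KL divergence with a temperature; it is a generic formulation rather than the method of any specific LLM-to-GNN paper, and the function names and logit values are made up for illustration.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float) -> List[float]:
    """Temperature-scaled softmax, shifted by the max for numerical stability."""
    scaled = [value / temperature for value in logits]
    peak = max(scaled)
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


def distillation_loss(teacher_logits: List[float], student_logits: List[float],
                      temperature: float = 2.0) -> float:
    """KL divergence from the teacher's soft labels to the student's predictions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))


teacher = [4.0, 1.0, 0.5]  # hypothetical class logits from the LLM teacher
matched = distillation_loss(teacher, [4.0, 1.0, 0.5])
mismatched = distillation_loss(teacher, [0.5, 1.0, 4.0])
```

In practice this term is usually combined with the ordinary supervised loss on whatever labeled nodes are available, so the student GNN learns from both the ground truth and the teacher.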

- Data Leakage and Evaluation: LLMs are pre-trained on vast amounts of publicly available data, which may include test sets from common benchmark datasets, leading to potential data leakage and overestimated performance. Researchers have started collecting new datasets or sampling test data from time periods after the LLM’s training cut-off to mitigate this issue.

Additionally, establishing fair and comprehensive evaluation benchmarks for LLM-enhanced graph learning models is crucial to measure their true capabilities and enable meaningful comparisons.
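The time-based mitigation mentioned above amounts to a simple filter on publication dates. The sketch below assumes each node carries a `published` date and uses a made-up cut-off date; the field names and the `split_by_cutoff` helper are hypothetical.

```python
from datetime import date
from typing import Dict, List, Tuple


def split_by_cutoff(papers: List[Dict], cutoff: date) -> Tuple[List[Dict], List[Dict]]:
    """Separate items the LLM may have seen from items published after its cut-off."""
    possibly_seen = [p for p in papers if p["published"] <= cutoff]
    unseen = [p for p in papers if p["published"] > cutoff]
    return possibly_seen, unseen


papers = [
    {"id": 0, "published": date(2021, 5, 1)},
    {"id": 1, "published": date(2023, 11, 20)},
    {"id": 2, "published": date(2024, 2, 3)},
]
# Hypothetical training cut-off; use the documented date for the model being evaluated.
possibly_seen, unseen = split_by_cutoff(papers, cutoff=date(2023, 9, 30))
```

Evaluating only on the `unseen` portion reduces the chance that test examples appeared in the LLM's pre-training data, although it does not rule out leakage through later fine-tuning.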

- Transferability and Explainability: While LLMs excel at zero-shot and few-shot learning, their ability to transfer knowledge across diverse graph domains and structures remains an open challenge. Improving the transferability of these models is a critical research direction.

Furthermore, enhancing the explainability of LLM-based graph learning models is essential for building trust and enabling their adoption in high-stakes applications. Leveraging the inherent reasoning capabilities of LLMs through techniques like chain-of-thought prompting can contribute to improved explainability.

- Multimodal Integration: Graphs often contain more than just textual information, with nodes and edges potentially associated with various modalities, such as images, audio, or numeric data. Extending the integration of LLMs to these multimodal graph settings presents an exciting opportunity for future research.

Real-world Applications and Case Studies

The integration of LLMs and graph machine learning has already shown promising results in various real-world applications:

- Molecular Property Prediction: In the field of computational chemistry and drug discovery, LLMs have been employed to enhance the prediction of molecular properties by incorporating structural information from molecular graphs. The LLM4Mol model, for instance, leverages ChatGPT to generate explanations for SMILES (Simplified Molecular-Input Line-Entry System) representations of molecules, which are then used to improve the accuracy of property prediction tasks.

- Knowledge Graph Completion and Reasoning: Knowledge graphs are a special type of graph structure that represents real-world entities and their relationships. LLMs have been explored for tasks like knowledge graph completion and reasoning, where the graph structure and textual information (e.g., entity descriptions) need to be considered jointly.

- Recommender Systems: In the domain of recommender systems, graph structures are often used to represent user-item interactions, with nodes representing users and items, and edges denoting interactions or similarities. LLMs can be leveraged to enhance these graphs by generating user/item side information or reinforcing interaction edges.

Conclusion

The synergy between Large Language Models and Graph Machine Learning presents an exciting frontier in artificial intelligence research. By combining the structural inductive bias of GNNs with the powerful semantic understanding capabilities of LLMs, we can unlock new possibilities in graph learning tasks, particularly for text-attributed graphs.

While significant progress has been made, challenges remain in areas such as efficiency, scalability, transferability, and explainability. Approaches such as knowledge distillation, fairer evaluation benchmarks, and multimodal integration are paving the way for practical deployment of LLM-enhanced graph learning models in real-world applications.